We are using the latest release and have started to focus on field testing of devices. We seem to have run into an issue with the ADR design, and I feel like I’m missing a configuration setting.

Basically, we are using US902 and our nodes are capped at 20 dBm. The only ADR settings that I’m aware of are installation_margin (left at its default of 10 in our system) and the min DR/max DR values.

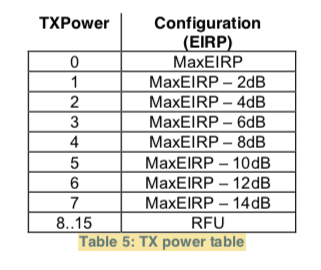

The problem is that the loop seems to adjust TX power first and foremost and only then, I guess, SF. I’ve watched the server request that our devices go to 22, 24, 26, and 28 dBm even though they don’t have this capability. As I’d expect, when this happens you can watch the SNR keep dropping because the nodes can’t comply, until eventually packets start getting lost. For some reason, I’ve never seen our system try to change the SF to anything other than 7.
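To make the power levels concrete, here is a rough sketch of how I understand those dBm values to map onto LoRaWAN TXPower indices. It assumes the US902-928 table in which index 0 is 30 dBm and each higher index is 2 dBm lower; the function names are my own illustration and not anything taken from the server.

```python
# Assumption: US902-928 TXPower table, index 0 = 30 dBm, 2 dBm less per index.
MAX_POWER_DBM = 30
STEP_DB = 2


def tx_power_dbm(index: int) -> int:
    """Nominal TX power in dBm for a TXPower index (hypothetical helper)."""
    return MAX_POWER_DBM - STEP_DB * index


def index_for_dbm(power_dbm: int) -> int:
    """TXPower index whose nominal power equals power_dbm (hypothetical helper)."""
    return (MAX_POWER_DBM - power_dbm) // STEP_DB


def lowest_usable_index(device_cap_dbm: int) -> int:
    """Smallest index whose nominal power does not exceed the device cap."""
    # Ceiling division, so an odd cap rounds toward the lower-power index.
    return max(0, -(-(MAX_POWER_DBM - device_cap_dbm) // STEP_DB))


# The power levels I saw the server request, expressed as indices.
requested_indices = [index_for_dbm(p) for p in (22, 24, 26, 28)]

# The index our 20 dBm nodes can actually honor.
cap_index = lowest_usable_index(20)
```

If that table is right, every one of those requests is for an index below the one our hardware can reach, which is why I would like a way to tell the server never to go below that index for these nodes.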

How does the ADR loop work? How do I tell the system that our nodes can’t go above 20 dBm? What is the installation margin, and when would you expect the server to signal an SF change instead of just a TX power change?

Thanks,

Patrick